I'm learning about neural networks, so I tried the following: the goal is to train a neural network to perform the regressions needed to mimic an arbitrary image processing pipeline consisting of:

- Non-linear RGB curves

- Colour desaturation

- Hue rotation

I did this kind of exercise in the past with curves, but curves only perform well on processing that can itself be done with curves (this excludes arbitrary desaturation and hue cycling).
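To experiment without Photoshop, a similar chain of operations can be imitated in NumPy to generate synthetic targets. This is a minimal sketch under assumptions: `toy_processing` and its parameters are hypothetical, and the power curves, Rec. 709 luma weights and rotation around the grey axis are stand-ins, not Photoshop's actual algorithms. Steps 2 and 3 mix the three channels, which is exactly what independent per-channel curves cannot reproduce.

```python
import numpy as np

def toy_processing(rgb, gamma=(0.8, 1.0, 1.3), desat=0.4, hue_deg=60.0):
    """Hypothetical stand-in for the Photoshop processing.

    rgb: float array of shape (..., 3) with values in [0, 1].
    """
    rgb = np.asarray(rgb, dtype=float)
    # 1) Non-linear curves: an (assumed) power curve applied to each channel independently
    out = rgb ** np.asarray(gamma)
    # 2) Desaturation: blend each pixel towards its Rec. 709 luma (a channel-mixing step)
    luma = out @ np.array([0.2126, 0.7152, 0.0722])
    out = (1.0 - desat) * out + desat * luma[..., None]
    # 3) Hue rotation: rotate colours around the grey axis (1, 1, 1)/sqrt(3)
    theta = np.radians(hue_deg)
    k = np.ones(3) / np.sqrt(3.0)
    K = np.array([[0.0, -k[2], k[1]],
                  [k[2], 0.0, -k[0]],
                  [-k[1], k[0], 0.0]])          # cross-product matrix of k
    R = np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)  # Rodrigues' formula
    out = out @ R.T                              # rotate every pixel (row vector)
    # The rotation can push colours outside the RGB cube, so clip back to [0, 1]
    return np.clip(out, 0.0, 1.0)
```

Greys lie on the rotation axis, so they stay grey; a 120° rotation cycles pure red to pure green.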

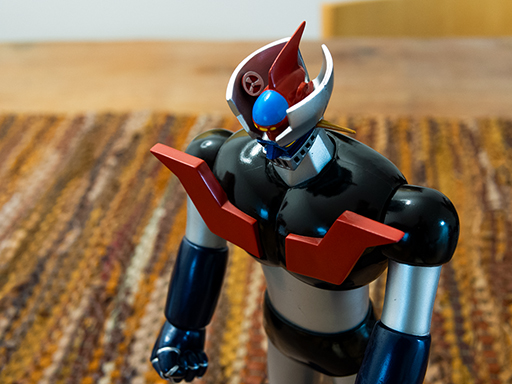

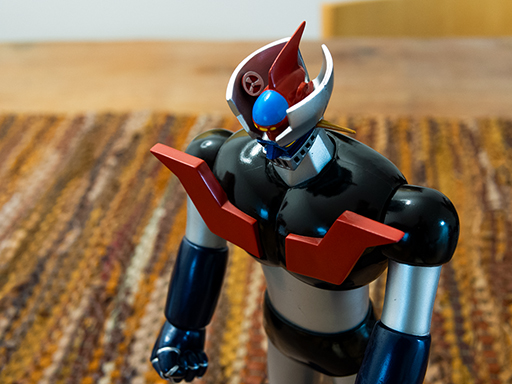

Our processing takes a picture like this...

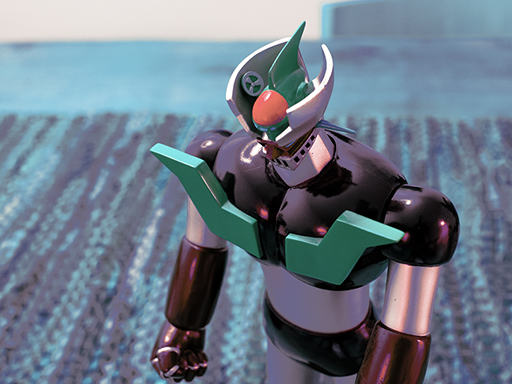

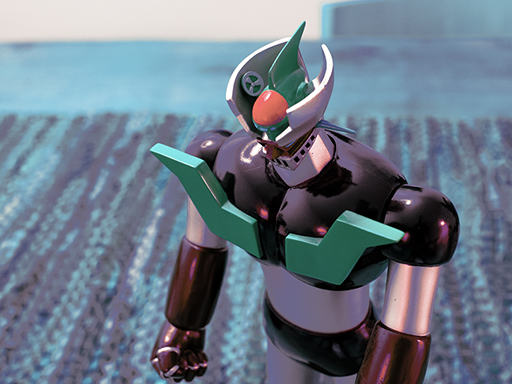

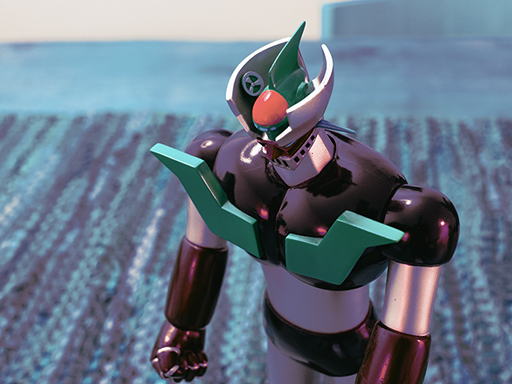

...and turns it into this (the ugly appearance is irrelevant; the point was to make things difficult for the NN):

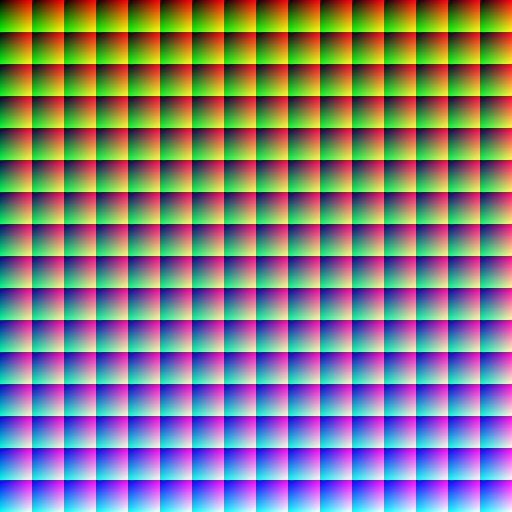

The process consists of teaching the neural network the transformation function from each input {R, G, B} to the corresponding output {R', G', B'}. This is done with a synthetic 8-bit image containing all possible combinations: 256 × 256 × 256 = 16,777,216 (about 17 million) colours (borrowed from Bruce Lindbloom). This is called the training set:

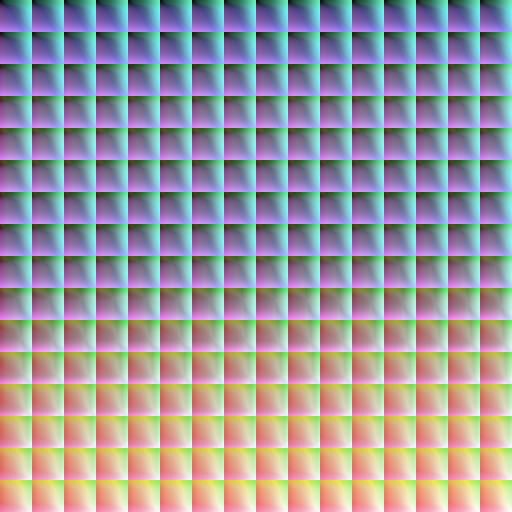
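An equivalent set of all 8-bit colours can be generated directly. This is a sketch, not the original file: `all_rgb_combinations` and `as_image` are hypothetical helpers, and the pixel order is an assumption that need not match Bruce Lindbloom's image (order does not matter for training, only that every triplet appears once).

```python
import numpy as np

def all_rgb_combinations(levels=256):
    """Return every (R, G, B) triplet as an (levels**3, 3) uint8 array.

    With levels=256 this is 256**3 = 16,777,216 rows (about 50 MB).
    levels must not exceed 256, since values are stored as uint8.
    """
    values = np.arange(levels, dtype=np.uint8)
    r, g, b = np.meshgrid(values, values, values, indexing='ij')
    return np.stack([r.ravel(), g.ravel(), b.ravel()], axis=1)

def as_image(rgb, width=4096):
    """Reshape (N, 3) triplets into an image; 4096 * 4096 == 256**3 for 8-bit."""
    return rgb.reshape(-1, width, 3)
```

Saved losslessly (e.g. as PNG), `as_image(all_rgb_combinations())` gives a 4096 × 4096 image that can be processed in Photoshop like any other.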

I then applied in Photoshop the processing we want to model:

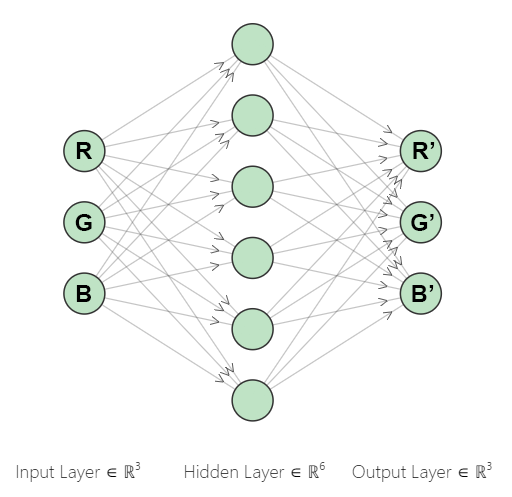
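The original and processed images then become the regression data: each pixel position gives one input triplet and its target triplet. A minimal sketch, assuming both images have identical size and pixel layout, and scaling values to [0, 1] (an assumption; the post does not say how values were normalised). `images_to_training_pairs` is a hypothetical name.

```python
import numpy as np

def images_to_training_pairs(before, after):
    """Turn an original image and its processed version into (X, y) arrays.

    before, after: uint8 arrays of shape (H, W, 3) with matching pixel layout.
    Returns X and y of shape (H*W, 3), scaled to [0, 1].
    """
    before = np.asarray(before)
    after = np.asarray(after)
    if before.shape != after.shape or before.shape[-1] != 3:
        raise ValueError("before/after must be matching (H, W, 3) images")
    X = before.reshape(-1, 3).astype(np.float32) / 255.0  # input colours
    y = after.reshape(-1, 3).astype(np.float32) / 255.0   # processed colours
    return X, y
```

The arrays can be loaded, for instance, with Pillow via `np.asarray(Image.open(path).convert('RGB'))`. Since pixels are paired by position, the processed image should be saved without resizing or lossy compression.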

The NN doesn't really know it's dealing with image information. For the NN this is just an ℝ³ → ℝ³ function regression problem. We train the NN with suitable hyperparameters (only one hidden layer was used, with 32 nodes):

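```python
from sklearn.neural_network import MLPRegressor

# NN training hyperparameters
regr = MLPRegressor(solver='adam',             # alternatives: 'sgd', 'lbfgs'
                    alpha=0,                   # no L2 (ridge regression) regularization
                    hidden_layer_sizes=(32,),  # one hidden layer with 32 nodes
                    activation='logistic',     # hidden layer activation function (default 'relu')
                    # 'logistic' (sigmoid) seems more adequate to model continuous functions
                    max_iter=30,               # max epochs
                    tol=0.00001,               # minimum loss improvement between epochs
                    n_iter_no_change=10,       # epochs without that improvement before stopping
                    verbose=True)              # tell me a story

regr.fit(X, y)  # X, y: (n, 3) arrays of original and processed RGB values

# Output layer activation function (default 'identity').
# 'relu' seems a good idea since RGB values can only be positive.
# fit() resets out_activation_ to 'identity', so it has to be set after training;
# it then only clips negative predictions to 0 (the net was trained with 'identity').
regr.out_activation_ = 'relu'
```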

This is what a NN with 6 hidden nodes looks like:

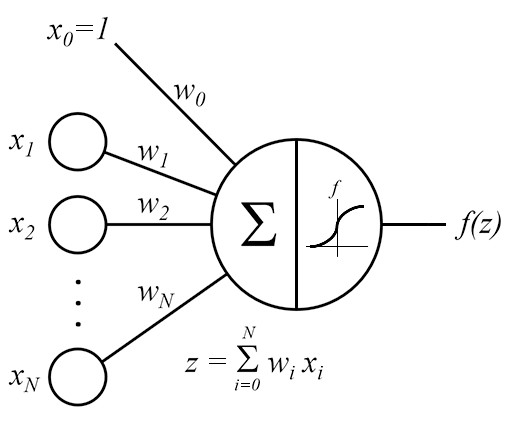

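Once trained, about 200 coefficients have been calculated (for the 32-node network: 3×32 hidden weights + 32 hidden biases + 32×3 output weights + 3 output biases = 227). With just these ~200 numbers, the NN models up to 17 million possible colour transformations. While NNs seem magic, at prediction time they just apply a basic weighted sum plus activation function at each node, output = f(Σ wᵢ·xᵢ + b), where f is the activation function and the weights wᵢ (plus the bias b) are the NN definition mentioned above.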
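To make the prediction step concrete, here is a minimal NumPy sketch of the forward pass for this kind of network (logistic hidden layer, identity or relu output). It assumes the weight shapes scikit-learn stores in `coefs_` and `intercepts_`; `mlp_forward` and `n_parameters` are hypothetical helper names.

```python
import numpy as np

def logistic(z):
    return 1.0 / (1.0 + np.exp(-z))

def mlp_forward(x, weights, biases, out_activation='identity'):
    """Forward pass of a one-hidden-layer MLP.

    x: (n, 3) inputs; weights: [W1 (3, h), W2 (h, 3)]; biases: [b1 (h,), b2 (3,)].
    """
    hidden = logistic(x @ weights[0] + biases[0])  # weighted sum + sigmoid at each hidden node
    out = hidden @ weights[1] + biases[1]          # weighted sum at each output node
    if out_activation == 'relu':
        out = np.maximum(out, 0.0)                 # clip negative outputs to 0
    return out

def n_parameters(n_in=3, n_hidden=32, n_out=3):
    """Number of weights plus biases in an n_in -> n_hidden -> n_out network."""
    return n_in * n_hidden + n_hidden + n_hidden * n_out + n_out
```

With a trained model, `mlp_forward(X, regr.coefs_, regr.intercepts_)` should match `regr.predict(X)` (identity output), a quick check that those few hundred numbers really are the whole model. For the 6-node network pictured above, `n_parameters(n_hidden=6)` gives 45.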

The last step is to run the net on images that are unknown to it (the NN never "saw" them during training); this is the test set. We then compare the prediction with the exact processing applied in Photoshop. Results seem promising, though I still have some things to improve (deep shadows and contrast come off worst, but colour is very good). Two examples:

Original image:

Exact processing:

NN prediction:

Original image:

Exact processing:

NN prediction:
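The test step (run the trained net over a new image, then compare it with the exact Photoshop result) can be sketched as follows. Assumptions: the model takes inputs scaled to [0, 1] as in training, `predict` is any callable such as `regr.predict`, and mean absolute error per channel is just one possible comparison metric. `apply_model` and `per_channel_error` are hypothetical names.

```python
import numpy as np

def apply_model(predict, image):
    """Run a trained colour model over an (H, W, 3) uint8 image."""
    h, w, _ = image.shape
    x = image.reshape(-1, 3).astype(np.float32) / 255.0  # same scaling as training (assumed)
    y = np.asarray(predict(x)).reshape(h, w, 3)
    # Predictions can fall slightly outside [0, 1]; clip before converting back to 8 bits
    return np.clip(np.rint(y * 255.0), 0, 255).astype(np.uint8)

def per_channel_error(prediction, reference):
    """Mean absolute error per channel (R, G, B), in 8-bit levels."""
    diff = prediction.astype(np.int16) - reference.astype(np.int16)
    return np.abs(diff).reshape(-1, 3).mean(axis=0)
```

For example, `per_channel_error(apply_model(regr.predict, original), photoshop_result)` would report how far the NN is from the exact processing in each channel; `original` and `photoshop_result` stand for the two uint8 test images.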

Possible applications:

- Copy someone's processing when they don't want to share it

- Camera JPEG replication from RAW (any JPEG style on any brand)

- Sensor calibration

- Reverse-engineer cinema filters

- Reverse-engineer app filters (Instagram, NIK, ...)

- Mimic old film (Kodachrome, Velvia, ...)

- Mimic chemical cross-processing

Since the NN is trained by supervised learning, it needs both the before and after images for training. These can be tricky to obtain in some cases (e.g. for film, we would need a chemically developed image and then be able to reproduce the same shot with a digital camera).

Regards