There is a persistent legend in the digital photography world that color space conversions cause color shifts and should be avoided unless absolutely necessary. Here's a quote from a recent post on LuLa.

Round trips between colorspaces lose both precision and accuracy, so the main thing is to avoid this. Profiles are necessary evils, but profile conversions really are horrible and should be kept to a minimum.

Although I'd not put it that strongly, I've had inklings of that attitude myself. In my case, it was based on old experience. Fifteen years ago, with 8-bit-per-color-plane precision ruling the land, there were strong reasons for that way of thinking, but times have changed. I thought that another look was in order. I've spent the better part of the last two weeks working on this project, and have been surprised and pleased by what I found.

Here's the take-home lesson:

Accurately implemented model-based conversions among RGB color spaces, with quantization to 15- or 16-bit unsigned integer precision, and/or conversions from those spaces to CIEL*a*b* and CIEL*u*v*, cause negligible errors.

That's a strong statement, and it flies in the face of conventional wisdom. I expect that you'll demand proof, and I hope to supply it, although not all in the first post.

First, some caveats: The above statement only applies to images where all colors are within the gamut of the destination color space. It may also apply only to algorithmic conversions, not to those performed through table lookup and interpolation. I haven't tested any table-based conversions.

Here's a third caveat, and one that bit me several times during this project: make sure that the color spaces are well-defined and implemented properly. I wasn't using ICC profiles for some of my work, and I used an image that was encoded in a color space with the sRGB primaries and white point but a gamma of 2.2, thinking it was encoded in the IEC 61966-2-1:1999 color space, which has a different nonlinearity. It took me several days to track down the problem.
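To see why that mix-up is easy to make and hard to spot, here is a minimal Python sketch comparing the two decoding curves. The piecewise sRGB formula is the IEC 61966-2-1 one; the "gamma 2.2" curve is assumed to be a pure power law, and the function names are hypothetical.

```python
def srgb_decode(v):
    """IEC 61966-2-1 sRGB: encoded value in [0, 1] -> linear light."""
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4


def gamma22_decode(v):
    """Pure power law with exponent 2.2 (assumed form of the 'gamma 2.2' space)."""
    return v ** 2.2


# Find the 8-bit code where the two decodings disagree most in linear light.
worst_code, worst_diff = max(
    ((c, abs(srgb_decode(c / 255) - gamma22_decode(c / 255))) for c in range(256)),
    key=lambda t: t[1],
)
print(f"largest mismatch at code {worst_code}: {worst_diff:.4f} of full scale")
```

The two curves agree at black and white but diverge in between, so decoding with the wrong one produces a systematic tone shift rather than random noise.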

Also, let me invite whoever's interested to replicate (or fail to replicate, and that's interesting, too) my results. If you PM me, I can send you my Matlab code, although it would be a better test if you didn't peek at it.

Let's start out with no integer quantization, with the color space conversion algorithms implemented in double precision floating point and the images stored in that representation. If we take the following 14 RGB color spaces:

IEC 61966-2-1:1999 sRGB

Adobe (1998) RGB

ProPhoto RGB

Joe Holmes’ Ektaspace PS5

SMPTE-C RGB

ColorMatch RGB

Don-4 RGB

Wide Gamut RGB

PAL/SECAM RGB

CIE RGB

Bruce RGB

Beta RGB

ECI RGB v2

NTSC RGB

(Take a look at Bruce Lindbloom's web site for definitions of all of the above.)
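Model-based conversions among these spaces start from each space's primaries and white point. A minimal Python sketch of deriving an RGB-to-XYZ matrix from those chromaticities, along the lines of the method Lindbloom describes, follows; the sRGB primaries and D65 white point are the standard values, while the helper names are hypothetical.

```python
def xy_to_xyz(x, y):
    """Chromaticity (x, y) -> XYZ scaled so that Y = 1."""
    return [x / y, 1.0, (1.0 - x - y) / y]


def matvec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a 3-vector."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]


def inv3(m):
    """Inverse of a 3x3 matrix via the adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[x / det for x in row] for row in adj]


def rgb_to_xyz_matrix(primaries, white_xy):
    """Columns are the primaries' XYZ, scaled so RGB (1, 1, 1) maps to the white point."""
    cols = [xy_to_xyz(*p) for p in primaries]
    p = [[cols[j][i] for j in range(3)] for i in range(3)]  # primaries as columns
    s = matvec(inv3(p), xy_to_xyz(*white_xy))               # per-primary scale factors
    return [[p[i][j] * s[j] for j in range(3)] for i in range(3)]


SRGB_PRIMARIES = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
D65_XY = (0.3127, 0.3290)

M = rgb_to_xyz_matrix(SRGB_PRIMARIES, D65_XY)
for row in M:
    print(" ".join(f"{v:.4f}" for v in row))
```

The middle row should come out close to the familiar 0.2126, 0.7152, 0.0722 luminance weights. Converting linear RGB between two spaces that share a white point is then the product of the destination's inverse matrix and the source's matrix; spaces with different white points also need a chromatic adaptation step.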

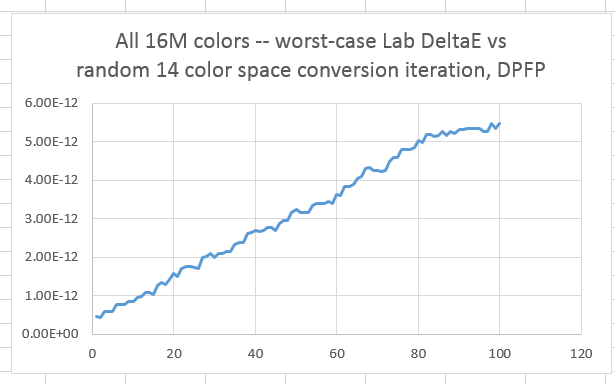

And we take as a test image all 16+ million colors that can be encoded in 8-bit sRGB with all colors mapped to a color set that falls within the gamut of all 14 color spaces, and perform chained (with processing of the white point performed using the calculations associated with what the ICC would call "relative colorimetric" intent) conversions from the current color space to one chosen at random (except that the current space is not allowed), and measure the difference in CIELab DeltaE of the pixel that is farthest from its value in the original image, we get the following curve:

The worst error, even after 100 iterations, is a few trillionths of a Lab DeltaE. The conversion algorithms themselves are quite accurate.
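The error measure behind these curves is a worst-case one: convert each pixel of the original and of the processed image to CIELab and take the largest color difference. A minimal Python sketch, assuming the plain CIE 1976 DeltaE (Euclidean distance in Lab) and a D65 reference white derived from the chromaticity (0.3127, 0.3290); the function names are hypothetical.

```python
import math

# Assumed reference white: D65 from its chromaticity, scaled so Y = 1.
D65_WHITE = (0.3127 / 0.3290, 1.0, (1.0 - 0.3127 - 0.3290) / 0.3290)


def _f(t):
    """CIE Lab companding function with its linear segment near black."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def xyz_to_lab(xyz, white=D65_WHITE):
    fx, fy, fz = (_f(c / w) for c, w in zip(xyz, white))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def worst_case_delta_e(original_xyz, processed_xyz, white=D65_WHITE):
    """Largest CIE 1976 DeltaE over all corresponding pixel pairs."""
    return max(math.dist(xyz_to_lab(p, white), xyz_to_lab(q, white))
               for p, q in zip(original_xyz, processed_xyz))
```

Taking the maximum rather than the mean makes the test deliberately pessimistic: one badly behaved pixel out of millions is enough to show up in the curve.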

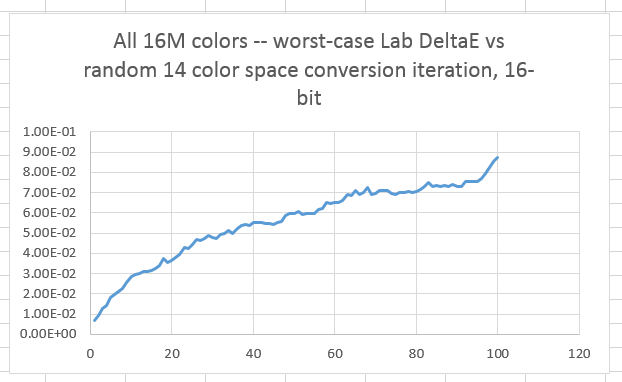

If we quantize to 16 bit unsigned integers after every conversion, we see this:

Now the worst-case error after 100 iterations is less than a tenth of a DeltaE.
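Quantizing after each conversion means rounding every channel to one of a fixed set of integer codes. A minimal sketch, assuming the quantization is applied to the encoded RGB values in [0, 1] and that n-bit means codes 0 through 2^n - 1 (Photoshop's "16-bit" mode is commonly described as using 0 through 32768, which this sketch does not model); `quantize` is a hypothetical helper.

```python
def quantize(values, bits):
    """Clamp each value to [0, 1], round it to the nearest n-bit code, return it as a float."""
    top = (1 << bits) - 1  # largest code: 255, 32767 or 65535
    return [round(min(max(v, 0.0), 1.0) * top) / top for v in values]


# Worst single-step rounding error on a fine grid of values in [0, 1].
samples = [i / 9973 for i in range(9974)]
for bits in (8, 15, 16):
    err = max(abs(a - b) for a, b in zip(samples, quantize(samples, bits)))
    print(f"{bits}-bit: max error {err:.3e}, bound {0.5 / ((1 << bits) - 1):.3e}")
```

Each quantization can move a channel by at most half a code: about 7.6e-6 of full scale at 16 bits versus about 2e-3 at 8 bits.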

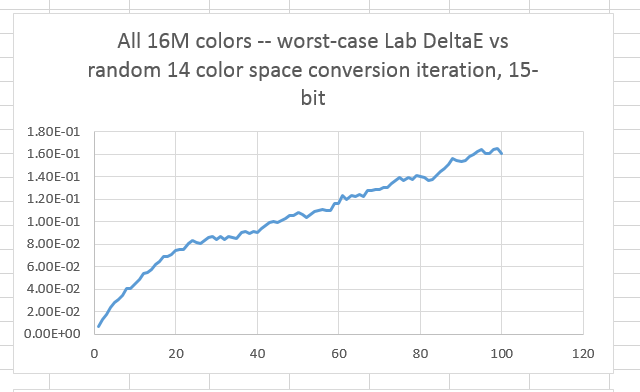

There are those who say that Photoshop processes images with 16-bit color depth using 16-bit precision.

Here are the worst case results with 15-bit quantization after every conversion:

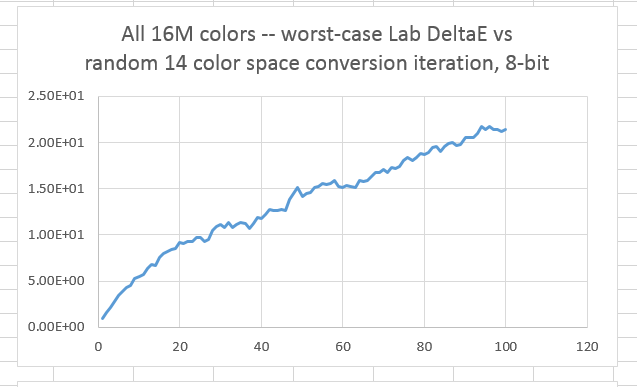

In the bad old days, we used 8-bit precision. What does this test do with that?

Not very good, huh?
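A scaled-down sketch of this kind of chained-conversion test, in Python, follows. It is an independent illustration, not the Matlab code mentioned above, and it rests on several simplifying assumptions: only two spaces (sRGB and Adobe RGB (1998), both with a D65 white, so no white-point adaptation is needed), a 125-color grid instead of 16+ million colors, 20 conversions instead of 100, quantization of the encoded RGB values, and the plain CIE 1976 DeltaE. All helper names are hypothetical.

```python
import math

D65_XY = (0.3127, 0.3290)
ADOBE_GAMMA = 563 / 256  # Adobe RGB (1998) encoding exponent


def xy_to_xyz(x, y):
    return [x / y, 1.0, (1.0 - x - y) / y]


def matvec(m, v):
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]


def inv3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[x / det for x in row] for row in adj]


def make_space(primaries, decode, encode):
    """Build (RGB->XYZ matrix, its inverse, decode, encode) for a D65 space."""
    cols = [xy_to_xyz(*p) for p in primaries]
    p = [[cols[j][i] for j in range(3)] for i in range(3)]
    s = matvec(inv3(p), xy_to_xyz(*D65_XY))
    m = [[p[i][j] * s[j] for j in range(3)] for i in range(3)]
    return m, inv3(m), decode, encode


SPACES = {
    "sRGB": make_space(
        [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)],
        lambda v: v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4,
        lambda v: v * 12.92 if v <= 0.0031308 else 1.055 * v ** (1 / 2.4) - 0.055),
    "Adobe RGB (1998)": make_space(
        [(0.64, 0.33), (0.21, 0.71), (0.15, 0.06)],
        lambda v: max(v, 0.0) ** ADOBE_GAMMA,
        lambda v: max(v, 0.0) ** (1 / ADOBE_GAMMA)),
}
WHITE_XYZ = xy_to_xyz(*D65_XY)


def to_xyz(rgb, space):
    m, _, decode, _ = SPACES[space]
    return matvec(m, [decode(c) for c in rgb])


def from_xyz(xyz, space):
    _, m_inv, _, encode = SPACES[space]
    return [encode(c) for c in matvec(m_inv, xyz)]


def xyz_to_lab(xyz):
    def f(t):
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d * d) + 4 / 29
    fx, fy, fz = (f(c / w) for c, w in zip(xyz, WHITE_XYZ))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def quantize(rgb, bits):
    """bits=None keeps double precision; otherwise round to one of 2**bits codes."""
    if bits is None:
        return rgb
    top = (1 << bits) - 1
    return [round(min(max(c, 0.0), 1.0) * top) / top for c in rgb]


def worst_error_curve(bits, iterations=20):
    levels = [0.1 + 0.2 * k for k in range(5)]  # 0.1 .. 0.9, inside both gamuts
    image = [[r, g, b] for r in levels for g in levels for b in levels]
    reference = [xyz_to_lab(to_xyz(px, "sRGB")) for px in image]
    names = list(SPACES)
    current = "sRGB"
    curve = []
    for _ in range(iterations):
        # With two spaces, "any space except the current one" means alternating.
        target = names[1] if current == names[0] else names[0]
        image = [quantize(from_xyz(to_xyz(px, current), target), bits) for px in image]
        current = target
        curve.append(max(math.dist(ref, xyz_to_lab(to_xyz(px, current)))
                         for ref, px in zip(reference, image)))
    return curve


for bits in (None, 16, 15, 8):
    label = "float" if bits is None else f"{bits}-bit"
    print(f"{label}: worst DeltaE after 20 conversions = {worst_error_curve(bits)[-1]:.2e}")
```

Extending it toward the full test would mean adding the other twelve spaces and choosing each next space at random.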

There's a lot more to be said, and I'll be making some more posts. If you want more details on what's in this one, look here:

http://blog.kasson.com/?p=7517

Thanks for reading this far. Comments and questions are solicited.

Jim