I woke up this morning with an idea: calibrating out photo-response non-uniformity (PRNU). First off, what is it? It’s a kind of noise in digital camera systems. It’s not actual noise, since it’s predictable. It stems from the fact that not all photosites in a given color plane of a digital camera have the same sensitivity to photons. If a group of photosites on the sensor are all exposed to the same photon flux for the same length of time, some will read higher values than others. If you consider the systematic errors to be a noise signal, the amplitude of the PRNU signal is directly proportional to the number of photons hitting the photosite, and therefore PRNU is greatest in the highlight areas of an image.

My idea for calibrating it out was bog-simple: construct a map of the pixel sensitivities, invert it, and multiply the values in the raw image by the inverted map. In the dim, distant future, cameras might do that themselves, with the maps generated by the manufacturers and burned into the camera before it is shipped. In the medium term, raw developer coders could have you make a series of calibration images, and use them to correct each image from that camera as it’s “developed”.
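Here is a minimal sketch of that map, invert, multiply idea for a single color plane, using plain Python lists so it runs without extra libraries (real raw data would more likely live in an array library). The function names `build_gain_map` and `apply_gain_map` are hypothetical, and the sketch assumes the black level has already been subtracted and that the flat-field plane is an average of many evenly lit frames. Normalizing to the plane mean is my assumption too, chosen so that the overall exposure is left unchanged.

```python
from statistics import fmean


def build_gain_map(flat_plane):
    """Return per-photosite correction gains from an averaged flat-field plane.

    flat_plane is a list of rows of black-subtracted values for ONE color plane.
    """
    plane_mean = fmean(v for row in flat_plane for v in row)
    # Relative sensitivity is value / mean; the correction gain is its inverse.
    return [[plane_mean / v for v in row] for row in flat_plane]


def apply_gain_map(raw_plane, gain_map):
    """Multiply each raw value by the gain for its photosite."""
    return [
        [v * g for v, g in zip(raw_row, gain_row)]
        for raw_row, gain_row in zip(raw_plane, gain_map)
    ]


# Toy 2x2 plane: photosites that read about 1% high or low under even light.
flat = [[1000.0, 1010.0], [990.0, 1000.0]]
gains = build_gain_map(flat)

# Correcting the flat field itself should give back a uniform plane.
corrected = apply_gain_map(flat, gains)
```

Each of the four raw color planes would need its own map, since the sensitivities are only comparable within a plane.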

Before I got too carried away with the idea, I thought I’d test a couple of cameras to discover the nature of PRNU. I made 256 exposures of a defocused white wall, first with the Nikon D4, and then with the Sony NEX-7. Those are the two cameras that I have handy that span the greatest range of photosite density.

Here’s how I got to 256 exposures. I figured that the PRNU and the shot noise at full scale might be comparable. If I wanted the PRNU data to be accurate, I should do enough averaging to reduce the shot noise by about a decimal order of magnitude. The square root of 256 is 8 [No, it's 16. Oops!], so that’s pretty close. Besides, I just couldn’t stomach the idea of making 1024 exposures. I did compute a corrected PRNU by subtracting the shot noise in quadrature, but it turned out to be a small correction; 256 images were enough. Whew!
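The arithmetic behind that choice can be sketched as follows. Averaging N frames cuts uncorrelated shot noise by the square root of N, and whatever shot noise is left can be removed from the measured standard deviation in quadrature. The function names and the example numbers are hypothetical, not measurements from either camera.

```python
import math


def shot_noise_after_averaging(single_frame_sigma, n_frames):
    """Shot noise left in the average of n_frames uncorrelated exposures."""
    return single_frame_sigma / math.sqrt(n_frames)


def subtract_in_quadrature(total_sigma, known_sigma):
    """Remove a known uncorrelated noise component from a measured sigma."""
    # Clamp at zero so rounding error can't produce a negative variance.
    return math.sqrt(max(total_sigma ** 2 - known_sigma ** 2, 0.0))


n_frames = 256
reduction = math.sqrt(n_frames)  # 16.0, a bit better than one decimal order

# Hypothetical numbers: single-frame shot noise of 100 units, and a measured
# standard deviation of 30 units in the 256-frame average.
residual_shot = shot_noise_after_averaging(100.0, n_frames)  # 6.25
prnu_sigma = subtract_in_quadrature(30.0, residual_shot)
print(f"residual shot noise {residual_shot:.2f}, corrected PRNU {prnu_sigma:.2f}")
```

With numbers like these the corrected figure barely moves from the measured one, which is the sense in which the correction is small.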

I used the base ISO (100 on both cameras), and a shutter speed of 1/30 of a second at an aperture that put the green channels of the raw images about one stop below clipping. I saved the still-mosaiced raw images as TIFFs, and averaged all 256 images for each camera. Then I wrote a program to extract each color plane from the averaged images and compute the mean and standard deviation of the data in a 200x200 pixel central region of the image (that's 10,000 pixels in each of the four color planes).
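This is not the program I wrote, just a small sketch of the same steps in plain Python: split a still-mosaiced image into its four color planes, crop a central square from a plane, and compute the mean and standard deviation. The names `bayer_planes`, `central_crop`, and `plane_stats` are hypothetical. The sketch assumes a 2x2 color filter array, and which offset corresponds to which color depends on the camera's layout, so the planes are keyed by offset rather than by color name.

```python
from statistics import fmean, pstdev


def bayer_planes(mosaic):
    """Split a mosaiced image (list of rows) into four planes keyed by 2x2 offset."""
    return {
        (r, c): [row[c::2] for row in mosaic[r::2]]
        for r in (0, 1)
        for c in (0, 1)
    }


def central_crop(plane, size):
    """Return the size x size square at the center of a plane."""
    top = (len(plane) - size) // 2
    left = (len(plane[0]) - size) // 2
    return [row[left:left + size] for row in plane[top:top + size]]


def plane_stats(plane, size):
    """Mean and (population) standard deviation of the central crop."""
    values = [v for row in central_crop(plane, size) for v in row]
    return fmean(values), pstdev(values)


# Toy 4x4 mosaic with a different constant value at each 2x2 offset.
mosaic = [
    [10, 20, 10, 20],
    [30, 40, 30, 40],
    [10, 20, 10, 20],
    [30, 40, 30, 40],
]
planes = bayer_planes(mosaic)
stats = {offset: plane_stats(plane, 2) for offset, plane in planes.items()}
```

A crop of 100 on each plane matches 10,000 pixels per plane. With that many samples the choice between population and sample standard deviation makes no practical difference.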

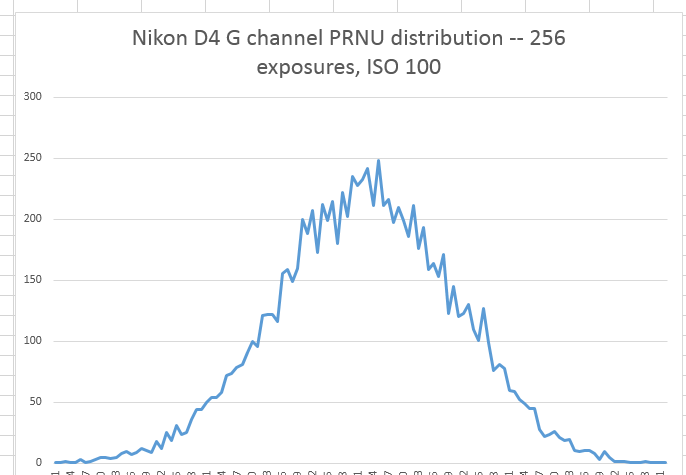

I looked at the histogram of the data and verified that the PRNU appears Gaussian:

I brought the statistics into Excel and computed the standard deviation of the PRNU as a percentage of the signal. Averaged over all four channels, the D4 PRNU was 0.29% of the signal and the NEX-7 PRNU was 0.41%. I then calculated the shot noise, and extrapolated it to what it would be for a nearly full-scale signal. Then I calculated the ratio of the PRNU to the shot noise at full scale. For the D4 it’s 97%, and for the NEX-7 it’s 70%.
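The spreadsheet steps can be sketched in a few lines of Python. The sketch leans on two scaling rules: PRNU grows in direct proportion to the signal, while shot noise grows as its square root. The function names are hypothetical, and the example values are made up to keep the arithmetic easy to follow. They are not the D4 or NEX-7 measurements.

```python
import math


def prnu_percent(prnu_sigma, mean_signal):
    """PRNU standard deviation as a percentage of the mean signal."""
    return 100.0 * prnu_sigma / mean_signal


def shot_noise_at(target_signal, measured_signal, measured_shot_sigma):
    """Extrapolate shot noise to another signal level (scales as sqrt(signal))."""
    return measured_shot_sigma * math.sqrt(target_signal / measured_signal)


def prnu_to_shot_ratio(prnu_fraction, target_signal,
                       measured_signal, measured_shot_sigma):
    """Ratio of PRNU to shot noise at target_signal."""
    prnu_sigma = prnu_fraction * target_signal  # PRNU scales linearly
    return prnu_sigma / shot_noise_at(
        target_signal, measured_signal, measured_shot_sigma
    )


# Made-up example: shot noise of 10 units measured at a signal of 100 units,
# PRNU of 5% of signal, extrapolated to a signal of 400 units.
example_ratio = prnu_to_shot_ratio(0.05, 400.0, 100.0, 10.0)
print(f"PRNU / shot noise at the target level: {example_ratio:.2f}")
```

Because PRNU rises faster than shot noise as the signal grows, the ratio is largest near full scale, which is why I evaluated it there.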

Then I got a whole lot less excited about this project. I don’t hear a lot of people complaining about noise in the highlight values of digital images. Even if the PRNU is the same as the shot noise, calibrating the PRNU out will only reduce the overall highlight noise to 70% of what it was (because shot noise and PRNU are uncorrelated, you can’t just subtract the numbers). Doesn’t seem like it’s worth the effort.
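The 70% figure comes from adding uncorrelated noise sources in quadrature. A short sketch, with a hypothetical function name, shows the fraction of highlight noise that would remain after a perfect PRNU calibration for a given PRNU-to-shot-noise ratio:

```python
import math


def remaining_noise_fraction(prnu_to_shot_ratio):
    """Fraction of total noise left once PRNU is removed entirely.

    Total noise before calibration is sqrt(shot^2 + prnu^2); afterwards only
    the shot noise remains, so the fraction is 1 / sqrt(1 + ratio^2).
    """
    return 1.0 / math.sqrt(1.0 + prnu_to_shot_ratio ** 2)


equal_case = remaining_noise_fraction(1.0)   # about 0.71, the "70%" above
d4_case = remaining_noise_fraction(0.97)     # about 0.72
nex7_case = remaining_noise_fraction(0.70)   # about 0.82
print(f"{equal_case:.2f} {d4_case:.2f} {nex7_case:.2f}")
```

So even an ideal calibration would leave roughly 72% of the full-scale highlight noise on the D4 and about 82% on the NEX-7, and less benefit than that at lower signal levels.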

I was taught that negative results were as valuable as positive ones, so I’m posting this. Also, I could certainly have made some errors in my thinking, and I’d like anyone who sees a problem with what I’ve just written to let me know.