Hi Wayne,

Michael Reichmann has a very nice video with Ray Maxwell, discussing colour:

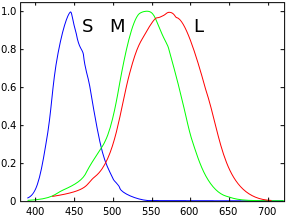

https://luminous-landscape.com/videos/luminous-landscape-video-journal-issue-17/interview-ray-maxwell-colour/

The normalized colour response curves of the eye that Doug shows are quite correct. The reason they work as well as they do is that the brain does a lot of processing. If we used eye-like curves in cameras, we would need math to separate red and green, and that would increase noise.
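To put a rough number on the noise point, here is a minimal sketch in Python. It uses a toy two-channel model that is my own assumption, not measured data: each raw channel sees its own colour with weight 1 and the other colour with weight `overlap`. Separating the two colours means inverting that mixing, and the function (a hypothetical helper, named only for this illustration) reports how much independent raw noise gets amplified in the process.

```python
import math

def unmix_noise_gain(overlap):
    """Noise gain when recovering two colours from two overlapping channels.

    Toy model (an assumption, not sensor data): the mixing matrix is
    [[1, overlap], [overlap, 1]].
    """
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be in [0, 1)")
    det = 1.0 - overlap ** 2
    # One row of the inverse mixing matrix is [1, -overlap] / det.
    row = (1.0 / det, -overlap / det)
    # Independent, equal noise in the raw channels adds in quadrature.
    return math.hypot(*row)

for overlap in (0.0, 0.3, 0.9):
    print(f"overlap {overlap:.1f}: noise gain {unmix_noise_gain(overlap):.2f}")
```

In this toy model a modest overlap costs very little, while a heavy overlap, like the one between the eye's red and green curves, multiplies the noise several times over.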

Spectral sensitivity of human vision, according to Doug:

Spectral sensitivity of human vision, according to Wikipedia:

The issue with Doug's article, or rather Phase One's presentation, is that both state that traditional sensors work very differently than they actually do.

All spectral plots of sensors I have seen are very similar to what Phase One / Doug calls the new approach, but it is hard to compare curves as he gives no scales.

It has often been stated that CFAs have been made more permissive to increase ISO. But there is little proof that less overlap improves colour rendition. On the contrary, it may lead to a certain colour blindness: a sensor may have a tendency to lump colours together instead of separating them correctly.

The image below indicates that Nikon designs have changed very little over the years. Note that the diagrams are shown with red (low frequency) on the left, corresponding to rainbow colours.

There is in all probability an optimal combination, or a set of optimal combinations, of CFA center points and widths yielding correct rendition of colour.

This may be a very good sensor regarding colour, fulfilling the Luther-Ives condition. But it is my understanding that CFA designs fulfilling the Luther-Ives condition are not practical.
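For anyone who wants to test a published curve: the Luther-Ives condition asks that each sensor sensitivity curve be a linear combination of the colour matching functions. A rough way to check that is to project the sampled sensor curve onto the span of the sampled colour matching functions and look at what is left over. The sketch below does this in plain Python. The function names are hypothetical and all the numbers are made-up toy samples, not real colour matching functions or real sensor data.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def residual_fraction(sensor, basis):
    """Fraction of the sensor curve's energy lying outside span(basis)."""
    ortho = []
    for b in basis:
        # Gram-Schmidt: strip components along directions already accepted.
        for q in ortho:
            c = dot(b, q)
            b = [x - c * y for x, y in zip(b, q)]
        norm = dot(b, b) ** 0.5
        if norm > 1e-12:
            ortho.append([x / norm for x in b])
    r = list(sensor)
    for q in ortho:
        c = dot(r, q)
        r = [x - c * y for x, y in zip(r, q)]
    return dot(r, r) / dot(sensor, sensor)

# Toy "colour matching functions" sampled at five wavelengths (made up).
x_bar = [0.1, 0.3, 0.2, 0.8, 0.4]
y_bar = [0.0, 0.2, 0.9, 0.6, 0.1]
z_bar = [0.9, 0.7, 0.1, 0.0, 0.0]
cmfs = [x_bar, y_bar, z_bar]

# A curve built as a linear combination satisfies the condition by design.
good = [0.5 * a + 0.2 * b + 0.1 * c for a, b, c in zip(x_bar, y_bar, z_bar)]
# A narrow-band filter passing a single sample does not.
narrow = [0.0, 0.0, 1.0, 0.0, 0.0]

print("combination :", residual_fraction(good, cmfs))
print("narrow band :", residual_fraction(narrow, cmfs))
```

A residual near zero means the curve could be mapped to the colour matching functions with a plain 3x3 matrix. A clearly non-zero residual means some colours that look different to the eye can end up with the same sensor response, or the other way round.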

Best regards

Erik

While I might agree that Doug’s plots overemphasized the red contamination, the spectral plots of many of the cameras posted indicate to me that there is plenty of red contamination getting all the way into the blue and green sensels, so perhaps cleaning this up and creating a more consistent crossover would offer some advantage.

As to whether it can be corrected with custom profiles, perhaps it might be improved, but most photographers probably don’t want to get into the technical aspect of creating their own profiles. If the camera and CFAs can be engineered to provide a better solution, why not? While the current price point doesn’t make sense for most, like anything new, if it’s good and helpful it will trickle into other products over time, most likely with far less impact on price.

In reviewing the article, I actually wonder if his two original graphs are labeled backwards. He does state that one is based on the eye and the other on a sensor, but as shown, the graph that is typical of the eye is labeled as a sensor, and vice versa.