We are talking about the A7 II here. I have two reasons to think this posterization has nothing to do with the compression algorithm (which would just subtract redundant information from the highlights), but rather with the fact that we are dealing with 12-bit linear data:

1. NUMBER OF FILLED LEVELS IN THE 12-BIT RAW FILES

RAW histogram (portion of the whole range):

Just counting used levels in the whole 12-bit range, we find 4 zones in the decoded values:

- From 128 to 801 all levels used -> 674 levels (128 is the black level for this camera in 12-bit mode; it becomes 512 in 14-bit mode)

- From 802 to 1424 half the levels used -> (1424-802)/2=311 levels

- From 1427 to 2023 one out of each 4 used -> (2023-1427)/4=149 levels

- From 2029 to 4101 one out of each 8 used -> (4101-2029)/8=259 levels

Total number of used levels: 674+311+149+259 = 1,393 levels (far from the maximum of 4,096)

In terms of encoding efficiency, the A7 II in 12-bit compressed mode is a log2(1393) = 10.44-bit camera.
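The tally above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic in this post: the zone boundaries and steps are the ones listed above, while the names `ZONES` and `used_levels` are made up for the illustration.

```python
import math

# (first level, last level, step between used levels), from the four zones above
ZONES = [(128, 801, 1), (802, 1424, 2), (1427, 2023, 4), (2029, 4101, 8)]

def used_levels(first, last, step):
    """Count used levels in a zone with the same arithmetic as the post."""
    if step == 1:
        # Fully populated zone: both endpoints are counted
        return last - first + 1
    # Sparse zones: counted as (last - first) / step, as in the list above
    return (last - first) // step

counts = [used_levels(*zone) for zone in ZONES]
total = sum(counts)
effective_bits = math.log2(total)

print(counts, total, round(effective_bits, 2))
```

Counting both endpoints in every zone would add a handful of levels, which does not change the effective bit depth in any meaningful way.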

In the shadows there seems to be no loss because of the compression, but we're still restricted to 12-bit linear density. This answers a question I asked in the forum some months ago:

"What I do not fully understand is that if one now develops this RAW file with DCRAW (gamma 1.0 output), the levels spread in the final image in the same way as in the decoded RAW file, without any compression curve applied. This shocks me because, given the high DR of this sensor, if the decoded values are already linear in a 12-bit range (of which only 10.44 bits are actually used), how can it avoid shadow posterization when lifting the shadows? And if they are not linear, how can the output from DCRAW not linearize them?"

The answer is clear to me now that I have the camera: indeed there is posterization (call it a visible lack of levels if you wish), something I never expected the clever engineers at Sony would let happen.

Sony's 12-bit compression algorithm could be much more efficient (at some CPU cost) by becoming non-linear, putting extra levels in the deep shadows and subtracting them from the highlights, where there is still room for a reduction with no IQ penalty. The very efficient 8-bit compression algorithm in the Leica M8 does this kind of clever non-linear compression, managing to obtain all the information from its sensor with 8-bit non-linear RAW files (just 256 different levels).
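To illustrate how a non-linear curve moves levels from the highlights into the shadows, here is a minimal sketch that compares a generic square-root encoding with a plain linear one, both squeezing an assumed 14-bit range into 8 bits. It is not the actual Leica or Sony algorithm; `MAX_IN`, `encode_sqrt`, `encode_linear` and `codes_used` are hypothetical names chosen for the example.

```python
import math

MAX_IN = 16383   # assumed 14-bit linear input range (illustrative)
MAX_OUT = 255    # 8-bit output codes

def encode_sqrt(v):
    """Generic square-root curve: finer steps in the shadows, coarser in the highlights."""
    return round(math.sqrt(v / MAX_IN) * MAX_OUT)

def encode_linear(v):
    """Plain linear requantization to 8 bits."""
    return round(v / MAX_IN * MAX_OUT)

def codes_used(encode, lo, hi):
    """Number of distinct output codes produced by inputs lo..hi (inclusive)."""
    return len({encode(v) for v in range(lo, hi + 1)})

# Deep shadows: everything 6 stops or more below saturation
shadow_hi = MAX_IN // 64
sqrt_shadow = codes_used(encode_sqrt, 0, shadow_hi)
lin_shadow = codes_used(encode_linear, 0, shadow_hi)

# Brightest stop: the top half of the input range
sqrt_top = codes_used(encode_sqrt, MAX_IN // 2, MAX_IN)
lin_top = codes_used(encode_linear, MAX_IN // 2, MAX_IN)

print("shadows:", sqrt_shadow, "vs", lin_shadow)
print("top stop:", sqrt_top, "vs", lin_top)
```

With the same 256 codes, the square-root curve spends several times more of them on the deep shadows than the linear mapping does, and pays for it with fewer codes in the brightest stop.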

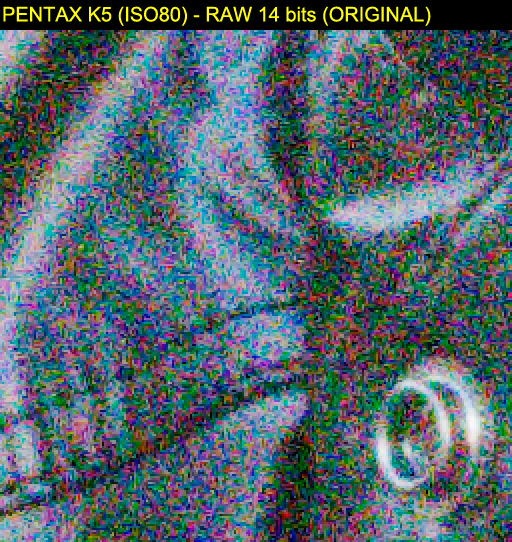

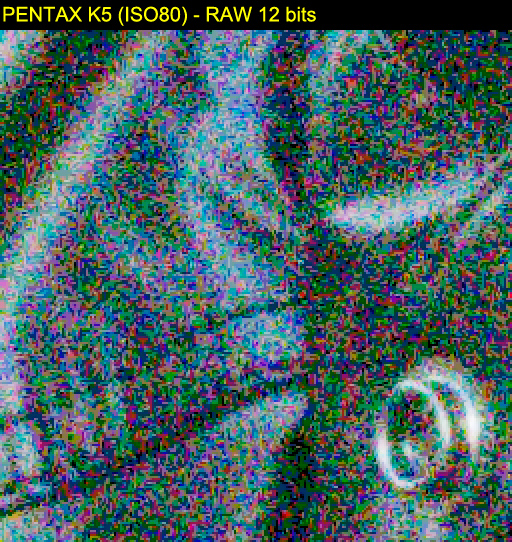

2. 12-BIT RAW DEVELOPMENT FROM 14-BIT UNCOMPRESSED RAW FILES

To explain the fallacy of 14-bit RAW files in some cameras (Canon 40D) and the real need for them in others (Pentax K5), some time ago I pushed a RAW file from the Pentax K5 (Sony sensor) by 5 stops after decimating its 14 bits to 12 bits prior to demosaicing. This is the same as having a 12-bit uncompressed RAW file. The result was exactly the same kind of posterization:

14-bit regular RAW development:

12-bit RAW development:

The article is here: DO RAW BITS MATTER?
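The effect of that decimation can be sketched numerically. The snippet below is a simplified illustration, not the procedure used in the article: it ignores black level, noise and demosaicing, and the names `decimate_14_to_12` and `push` as well as the chosen shadow range are assumptions made for the example.

```python
def decimate_14_to_12(v14):
    """Drop the two least significant bits of a 14-bit value."""
    return v14 >> 2

def push(value, stops, max_value):
    """Linear exposure push by a number of stops, clipped at max_value."""
    return min(value * 2 ** stops, max_value)

# Hypothetical deep-shadow range: the lowest 512 of the 16384 14-bit levels,
# i.e. everything 5 stops or more below saturation
shadow_14 = range(0, 512)

# Distinct output levels after a 5-stop push, with and without decimation
levels_14 = {push(v, 5, 16383) for v in shadow_14}
levels_12 = {push(decimate_14_to_12(v), 5, 4095) for v in shadow_14}

print(len(levels_14), len(levels_12))
```

After the push those shadows fill the whole output range in both cases, but the 12-bit version has only a quarter of the distinct levels to do it with, which is where the visible steps come from.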

---

So my conclusion is that Sony's compression doesn't affect image quality; it is simply that 12 linear bits have not been enough for the high dynamic range of these sensors since their debut. The compression just makes the files smaller, so maybe a compressed 14-bit format would be the best trade-off:

- ISO100 12-bit compressed: 24MB

- ISO100 14-bit uncompressed: 48MB

- ISO3200 14-bit uncompressed: 48MB

If someone wants to play with the RAW files:

http://www.guillermoluijk.com/davidcoffin/

Regards