Ted, I did a quick simulation to help with the dither issue. I assumed a mean exposure of 51000 electrons, with a full scale of 77000 electrons, on every pixel of a 1000x1000 monochromatic image, and assumed that all noise was photon noise.

Here's the Matlab code. All the images except the electron image are normalized to one. The histogram function plots the histogram between plus and minus two standard deviations of the mean at whatever resolution is specified (it un-normalizes prior to that operation):

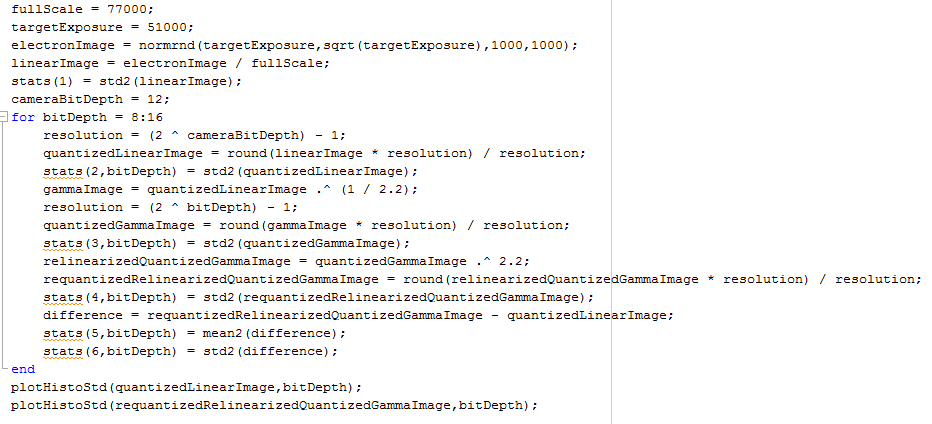
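For anyone who wants something to paste, here is a minimal sketch of the setup described above. It is not the original listing. The variable names are made up for illustration, and the photon noise is approximated as Gaussian with sigma = sqrt(mean) rather than drawn from a true Poisson distribution (an assumption that avoids needing the Statistics Toolbox, and a close one at 51000 electrons). Unlike the histogram described above, this version drops values outside plus or minus two standard deviations instead of piling them into the end buckets.

```matlab
% Sketch of the simulation setup (assumed names, Gaussian stand-in for Poisson).
meanElectrons = 51000;        % mean exposure, electrons
fullScale     = 77000;        % full scale, electrons
imageSize     = [1000 1000];  % monochromatic image
cameraBits    = 16;           % camera bit depth; try 12 for the second case

% Photon noise: variance equals the mean, so sigma = sqrt(mean).
electrons = meanElectrons + sqrt(meanElectrons) * randn(imageSize);
electrons = min(max(round(electrons), 0), fullScale);

% Normalize to one, then quantize to the camera bit depth.
levels    = 2^cameraBits - 1;
cameraImg = round(electrons / fullScale * levels) / levels;

% Histogram: un-normalize to integer codes, keep mean +/- 2 sigma, count per code.
codes   = round(cameraImg(:) * levels);
lo      = round(mean(codes) - 2 * std(codes));
hi      = round(mean(codes) + 2 * std(codes));
inRange = codes(codes >= lo & codes <= hi);
counts  = accumarray(inRange - lo + 1, 1, [hi - lo + 1, 1]);

fprintf('normalized mean = %.4f, std = %.6f\n', mean(cameraImg(:)), std(cameraImg(:)));
fprintf('std in %d-bit counts = %.1f\n', cameraBits, std(codes));
```

Calling `bar(lo:hi, counts)` afterwards plots the result. With these numbers the noise sigma is sqrt(51000)/77000, about 0.0029 of full scale, which is roughly 192 counts at 16 bits and roughly 12 counts at 12 bits.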

When you run it with the camera bit depth set to 16 and look at the 16 bit linear histogram, here's what you see:

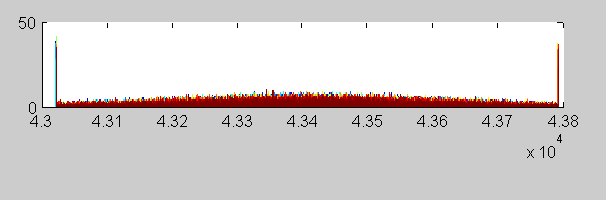

And here's the histogram of a round trip from 16 bit linear to 16 bit gamma 2.2, and back, quantizing at each step:

You can see that the histogram is more ragged, and that there is some depopulation, but you can also see that the photon noise dominates. The two peaks on either end are the result of big buckets there; please ignore them.

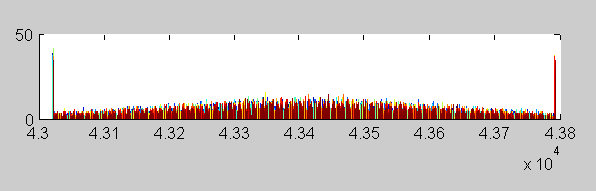

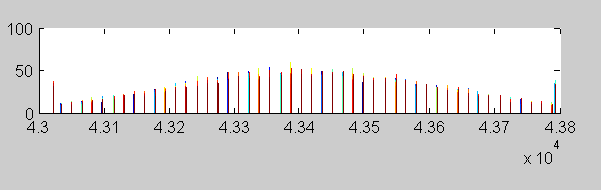
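The depopulation can also be checked without any noise at all. The sketch below (assumed variable names, not taken from the original listing) pushes every 16-bit linear code within plus or minus two sigma of the mean through gamma 2.2 and back, rounding at each step, and counts how many distinct codes survive. Around 0.66 of full scale the slope of the encoding curve works out to roughly 0.57, so fewer gamma codes than linear codes are available there and some linear buckets have to end up empty.

```matlab
% Sketch: 16-bit linear -> 16-bit gamma 2.2 -> 16-bit linear, quantizing each step.
gammaValue = 2.2;
levels16   = 2^16 - 1;

% Normalized mean and sigma of the simulated exposure.
mu    = 51000 / 77000;
sigma = sqrt(51000) / 77000;

% Every 16-bit linear code within +/- 2 sigma of the mean.
linCodes = (round((mu - 2*sigma) * levels16) : round((mu + 2*sigma) * levels16))';

% Encode, quantize, decode, quantize.
gammaCodes = round(levels16 * (linCodes   / levels16) .^ (1 / gammaValue));
backCodes  = round(levels16 * (gammaCodes / levels16) .^ gammaValue);

nIn  = numel(linCodes);
nOut = numel(unique(backCodes));
fprintf('%d input codes -> %d occupied codes after the round trip\n', nIn, nOut);
```

The empty codes are spread through the range rather than clustered, which is consistent with a ragged histogram whose overall shape is still set by the photon noise.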

Now, what if we assume a 12-bit camera bit depth, and convert the image to 16-bit linear:

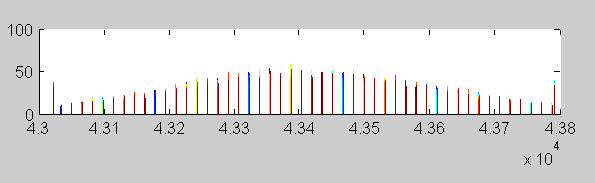

Taking it to 16 bit gamma 2.2 and back, we get:

You can see that the histogram isn't changed much, because the slope of the gamma compression curve never gets low enough that two of the twelve-bit buckets are combined.
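The slope argument can be verified directly. Adjacent 12-bit levels sit about 16 codes apart in 16-bit linear, and the slope of the gamma 2.2 encoding curve bottoms out at 1/2.2, about 0.45, at full scale, so neighboring levels should still be about 7 gamma codes apart in the worst case. The sketch below (assumed variable names) runs all 4096 levels through the round trip and checks that none of them merge.

```matlab
% Sketch: do any two 12-bit levels collapse in a 16-bit gamma 2.2 round trip?
gammaValue = 2.2;
levels12   = 2^12 - 1;
levels16   = 2^16 - 1;

codes12 = (0:levels12)';                                           % every 12-bit code
lin16   = round(codes12 / levels12 * levels16);                    % 12-bit -> 16-bit linear
gam16   = round(levels16 * (lin16 / levels16) .^ (1 / gammaValue)); % encode and quantize
back16  = round(levels16 * (gam16 / levels16) .^ gammaValue);       % decode and quantize

minGap    = min(diff(gam16));             % smallest spacing between neighbors, gamma codes
nDistinct = numel(unique(back16));        % distinct levels after the round trip
maxErr    = max(abs(back16 - lin16));     % worst round-trip error, 16-bit counts
recovered = all(round(back16 / levels16 * levels12) == codes12);

fprintf('min gap = %d gamma codes, distinct levels = %d, max error = %d counts\n', ...
        minGap, nDistinct, maxErr);
```

The round trip can still move a level by a count or so in 16-bit linear, but that is small against the 16-count spacing, so requantizing to 12 bits gets the original codes back.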

I've got statistics versus bit depth if you're interested, but I think this hits the high spots.

OBTW, I've been a fan of dithering from way back:

https://www.google.com/patents/US4187466

Thanks,

Jim