Hmm. While there is a slippery slope in there, there are some further considerations.

Regarding the question of whether digital imagery is really A/D and/or D/A converted, this discussion may be a slippery slope. One can say with certainty that digital images are "digital". I believe it is fair to say that (quantum physics aside) visible light is, for all intents and purposes, "analog". Thus a visible scene is analog, and so is a print or an image viewed on your monitor. To capture a digital image (or to render one), some kind of A/D or D/A operation is needed.

My point was that the spatial-domain behaviour of a display device (let us assume monochrome for simplicity) can be compared to that of an audio D/A converter whose interpolation filter has been reduced to a crude box-car/sample-and-hold characteristic. This will lead to significant "imaging" (the D/A equivalent of "aliasing").
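To put a number on the box-car argument: a zero-order hold (a pixel of uniform brightness across its full width) has a |sinc| frequency response, so the spectral images at k·fs ± f are only mildly attenuated. The Python sketch below assumes an ideal box-car with 100 % fill factor and no further optical or perceptual filtering; the helper names are hypothetical.

```python
import math

def zoh_gain_db(f, fs):
    """Gain (dB) of an ideal zero-order hold (box-car of width 1/fs) at frequency f."""
    x = f / fs
    if x == 0:
        return 0.0
    mag = abs(math.sin(math.pi * x) / (math.pi * x))  # |sinc(f / fs)|
    return 20 * math.log10(mag) if mag > 0 else float("-inf")

def image_levels_db(f, fs, k_max=2):
    """Level of the images at k*fs - f and k*fs + f, relative to the wanted component at f."""
    ref = zoh_gain_db(f, fs)
    levels = []
    for k in range(1, k_max + 1):
        for g in (k * fs - f, k * fs + f):
            levels.append((g, zoh_gain_db(g, fs) - ref))
    return levels

# A component at 0.4 * fs (e.g. 0.4 cycles per pixel on a display):
for freq, rel_db in image_levels_db(0.4, 1.0):
    print(f"image at {freq:.1f} x fs: {rel_db:6.1f} dB relative to the wanted signal")
```

For a component at 0.4 fs, sin(0.6π) = sin(0.4π), so the first image at 0.6 fs sits only 20·log10(2/3), about 3.5 dB, below the wanted signal; an audio D/A with a proper reconstruction filter would push it down by tens of dB.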

By analogy with single-bit oversampled audio converters, one might expect that displays/prints of very high spatial resolution but reduced sample precision (e.g. 1 bit), driven by noise-shaped dithering and digital resampling, could be one way to achieve high quality when "proper" spatial-domain low-pass filtering is hard to do.
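A one-dimensional sketch of the idea, under heavy simplifying assumptions: a first-order error-feedback (noise-shaping) quantizer, the simplest relative of the sigma-delta modulators in 1-bit audio converters, renders a grey level as 0/1 "pixels", and a box average stands in for the low-pass action of viewing distance and the eye. It is not a display or print pipeline; a 2-D version would be error diffusion such as Floyd–Steinberg, which this does not implement. Function names are illustrative.

```python
def noise_shaped_1bit(x):
    """First-order error-feedback quantizer: values in [0, 1] -> 0/1 output.
    The quantization error of each sample is added to the next one, which
    pushes the error toward high (spatial) frequencies."""
    out = []
    err = 0.0
    for v in x:
        u = v + err                     # input plus previous error
        y = 1.0 if u >= 0.5 else 0.0    # 1-bit decision
        err = u - y                     # stays within [-0.5, 0.5] for inputs in [0, 1]
        out.append(y)
    return out

def box_average(y, width):
    """Non-overlapping block average: a crude stand-in for the eye's low-pass."""
    return [sum(y[i:i + width]) / width for i in range(0, len(y) - width + 1, width)]

# A 30 % grey level rendered with 1-bit "pixels" at 100x the viewing resolution.
bits = noise_shaped_1bit([0.3] * 1000)
print(box_average(bits, 100))
```

Because the error carried between samples is bounded to ±0.5, each block of 100 output bits averages to within 1/100 of the 0.3 input, even though every individual "pixel" is only 0 or 1.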

Consider that, in the case of the analog audio signal (or any such continuous function), the digitized samples determine every single point, ad infinitum, of the input signal, as an analytical fact.
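Strictly, this holds only for a signal band-limited below fs/2 and an infinite sequence of samples, via the Whittaker–Shannon interpolation formula x(t) = Σ x[n]·sinc(t − n). The Python sketch below uses a finite window of samples, so the reconstruction is only approximate; the function names are illustrative.

```python
import math

def sinc(x):
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def whittaker_shannon(samples, first_index, t):
    """Evaluate sum_n x[n] * sinc(t - n), with t in units of the sample period."""
    return sum(s * sinc(t - (first_index + k)) for k, s in enumerate(samples))

f = 0.1                                   # cycles/sample, well below fs/2 = 0.5
n_range = range(-1000, 1001)              # finite window: the result is approximate
samples = [math.sin(2 * math.pi * f * n) for n in n_range]
t = 0.5                                   # halfway between two samples
approx = whittaker_shannon(samples, n_range.start, t)
print(approx, math.sin(2 * math.pi * f * t))
```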

Audio A/D converters use real-world anti-aliasing pre-filters with finite delay and finite stop-band attenuation. Thus they too will have some aliasing into the passband, and the original waveform cannot be recreated with infinite precision (even ignoring lossy quantization). But since they can suppress this error by e.g. 80 or 100 dB, it is generally not a problem.
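A small Python illustration of that residual aliasing, with assumed numbers (48 kHz sampling, a 30 kHz component, 100 dB of stop-band attenuation): whatever leaks through the pre-filter above fs/2 produces exactly the same samples (up to sign) as an in-band tone at fs − f, so it cannot be removed afterwards, only kept small.

```python
import math

def sampled_tone(f, fs, n_samples, amplitude):
    return [amplitude * math.sin(2 * math.pi * f * n / fs) for n in range(n_samples)]

fs = 48_000.0
f_in = 30_000.0                 # above fs/2 = 24 kHz, should have been removed
f_alias = fs - f_in             # 18 kHz: where it lands, inside the passband
stopband_db = 100.0             # assumed anti-aliasing filter attenuation at f_in
leak = 10 ** (-stopband_db / 20)

leaked = sampled_tone(f_in, fs, 64, leak)
in_band = sampled_tone(f_alias, fs, 64, leak)
# sin(2*pi*(fs - f)*n/fs) = -sin(2*pi*f*n/fs) for integer n, so the two
# sample sequences differ only in sign.
print(max(abs(a + b) for a, b in zip(leaked, in_band)))
```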

Having such long filters in digital imagery (thousands of taps) would be really expensive, and might introduce visible ringing that users object to (analogies between our vision and our hearing cannot be stretched very far). Physical spatial filtering of light is even worse:

camera sensors use a comb filter (the optical low-pass filter, OLPF):

http://www.ephotozine.com/article/wide-band-phase-retardation-film-olpf-6500

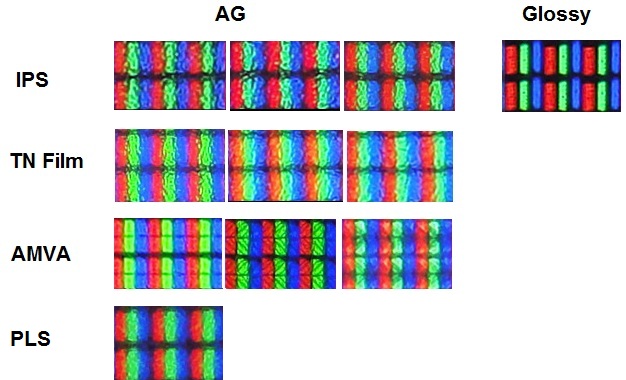

while monitors seem to use some "randomized" smearing:

http://www.tftcentral.co.uk/articles/panel_coating.htm

(it seems that this smearing is effective mostly at subpixel distances, and not so much at full-pixel distances)
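Why the camera OLPF is called a comb filter: a single birefringent plate splits each point into two spots a distance d apart, and the magnitude of the Fourier transform of that two-spot PSF is |cos(π f d)|, which has a null at f = 1/(2d) but returns to unity at 1/d. The sketch assumes d equals the pixel pitch; real designs vary, and many stack plates for horizontal and vertical splitting, giving four spots.

```python
import math

def olpf_mtf(f, d):
    """MTF of a 1-D two-spot birefringent OLPF: PSF = 0.5*[delta(x) + delta(x - d)].
    f in cycles per unit length, d = spot separation in the same unit."""
    return abs(math.cos(math.pi * f * d))

pitch = 1.0                       # assumed: separation equal to the pixel pitch
fs = 1.0 / pitch                  # sampling frequency, cycles per pixel
for f in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{f / fs:.2f} x fs: MTF = {olpf_mtf(f, pitch):.3f}")
```

The null lands exactly at fs/2, but the response is periodic, so energy near fs passes again; the OLPF notches rather than low-passes.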

I believe that if/when you operate a camera in such a way that the combined effect of scene detail, movement, diffraction, OLPF, etc. gives significant attenuation (e.g. 48 dB) at fs/2 and above (pessimistically, for the red and blue channels of a Bayer sensor), then one might say that the scene was sampled in a Nyquist sense and can be recreated (within other limits, such as noise/saturation, color filtering, etc.). The same goes for displays: when the density of monitor pixels relative to the "pixel PSF", viewing distance, human visual acuity, content, digital filtering, etc. is chosen appropriately, one might say that the image is spatially recreated in the Nyquist sense.
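A rough way to check such a criterion for the optical part of the chain is to multiply component MTFs and look at the largest value at or above fs/2. The Python sketch below assumes an aberration-free lens (diffraction only), 100 % fill-factor square pixels, and a two-spot OLPF with separation equal to the pixel pitch. It ignores scene detail, motion, defocus and the coarser red/blue sampling of a Bayer sensor, and the pixel pitch, wavelength and f-numbers are example values only.

```python
import math

def diffraction_mtf(nu, wavelength_mm, f_number):
    """Aberration-free circular-pupil lens, incoherent light."""
    cutoff = 1.0 / (wavelength_mm * f_number)   # cycles/mm
    x = nu / cutoff
    if x >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(x) - x * math.sqrt(1.0 - x * x))

def pixel_mtf(nu, pitch_mm):
    """Square pixel aperture with 100 % fill factor: |sinc(nu * pitch)|."""
    x = nu * pitch_mm
    return 1.0 if x == 0 else abs(math.sin(math.pi * x) / (math.pi * x))

def olpf_mtf(nu, pitch_mm):
    """Two-spot OLPF with spot separation equal to the pixel pitch."""
    return abs(math.cos(math.pi * nu * pitch_mm))

def min_attenuation_db(pitch_mm, wavelength_mm, f_number, use_olpf=True, steps=4000):
    """Smallest attenuation (dB) of the combined MTF between fs/2 and the
    diffraction cutoff (above which the lens passes nothing)."""
    nyquist = 0.5 / pitch_mm
    cutoff = 1.0 / (wavelength_mm * f_number)
    if cutoff <= nyquist:
        return math.inf
    peak = 0.0
    for i in range(steps + 1):
        nu = nyquist + (cutoff - nyquist) * i / steps
        m = diffraction_mtf(nu, wavelength_mm, f_number) * pixel_mtf(nu, pitch_mm)
        if use_olpf:
            m *= olpf_mtf(nu, pitch_mm)
        peak = max(peak, m)
    return math.inf if peak == 0.0 else -20.0 * math.log10(peak)

# Assumed example: 4 um pixels, 550 nm light, 48 dB target from the text.
for n in (2.8, 5.6, 11):
    att = min_attenuation_db(0.004, 0.00055, n)
    print(f"f/{n}: {att:.1f} dB at or above fs/2 ->", "OK" if att >= 48 else "aliasing likely")
```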

The "problem" seems to be that (many) photographers and customers do not really want this; rather, they want (in some sense) larger-than-life acuity that stretches the capabilities of current sampling densities. This makes it harder to apply the neat signal-processing theory from e.g. audio to imaging problems. While in audio you can generally make assumptions about what the samples "really" mean physically, and then move on to the number-crunching, in imagery the samples do not always have this nice physical interpretation. Thus a good audio resampler can be characterized by a couple of decent measurements, while the choice of a good image resampler depends (to a larger degree) on the image content, display device and viewer preferences.
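As an example of such measurements: for a resampler's low-pass FIR, two numbers go a long way, the passband ripple and the minimum stop-band attenuation. The sketch designs a Blackman-windowed sinc (chosen here for illustration, not taken from any particular resampler) and measures both by evaluating the frequency response on a grid; the band edges and tap count are assumptions, and the function names are hypothetical.

```python
import cmath
import math

def windowed_sinc_lowpass(num_taps, cutoff):
    """Linear-phase low-pass FIR: ideal sinc truncated by a Blackman window.
    cutoff in cycles/sample (0 < cutoff < 0.5); num_taps should be odd."""
    m = (num_taps - 1) / 2
    h = []
    for n in range(num_taps):
        x = n - m
        ideal = 2 * cutoff if x == 0 else math.sin(2 * math.pi * cutoff * x) / (math.pi * x)
        w = (0.42 - 0.5 * math.cos(2 * math.pi * n / (num_taps - 1))
             + 0.08 * math.cos(4 * math.pi * n / (num_taps - 1)))
        h.append(ideal * w)
    s = sum(h)
    return [c / s for c in h]   # normalize to unity gain at DC

def gain_db(h, f):
    """|H(f)| in dB, evaluated directly from the taps (f in cycles/sample)."""
    resp = sum(c * cmath.exp(-2j * math.pi * f * n) for n, c in enumerate(h))
    return 20 * math.log10(max(abs(resp), 1e-300))

def measure(h, passband_edge, stopband_edge, points=400):
    """Return (passband ripple in dB, minimum stop-band attenuation in dB)."""
    pb = [gain_db(h, passband_edge * i / points) for i in range(points + 1)]
    sb = [gain_db(h, stopband_edge + (0.5 - stopband_edge) * i / points)
          for i in range(points + 1)]
    return max(pb) - min(pb), -max(sb)

ripple, attenuation = measure(windowed_sinc_lowpass(129, 0.25), 0.2, 0.3)
print(f"passband ripple {ripple:.4f} dB, stop-band attenuation {attenuation:.1f} dB")
```

For images there is no comparably compact pair of figures that settles the choice, which is the contrast drawn above.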

-h