Actually it's easier to interpolate the original color because each third line has as many greens, as blues and reds...

I don't think that you are making much sense here. We are talking about sparsely sampling a color scene using a CFA in front of the sensor, so that only a single color channel is captured at any one point. Bayer uses a 2:1:1 distribution among green/red/blue, while Fuji seems to use 2.5:1:1 (slightly more green sensels). A simple (suboptimal) approach would be to interpolate each channel independently. In that case, Fuji has to cover larger "gaps" in the red and blue channels (2x2) than Bayer does.
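For anyone who wants to check the 2:1:1 and 2.5:1:1 figures, here is a minimal Python sketch that just counts sensels in one repeat tile. The 6x6 layout is the X-Trans tile as I have seen it commonly published; treat the exact layout and phase as an assumption on my part, and `channel_ratio` is just a name I made up.

```python
from collections import Counter

# 2x2 Bayer tile (RGGB phase chosen arbitrarily).
BAYER = ["RG",
         "GB"]

# 6x6 X-Trans tile as commonly published (assumed layout, phase may differ).
XTRANS = ["GBGGRG",
          "RGRBGB",
          "GBGGRG",
          "GRGGBG",
          "BGBRGR",
          "GRGGBG"]

def channel_ratio(tile):
    """Return (green, red, blue) counts normalised so that red == 1."""
    counts = Counter("".join(tile))
    return tuple(counts[c] / counts["R"] for c in "GRB")

print(channel_ratio(BAYER))   # (2.0, 1.0, 1.0)
print(channel_ratio(XTRANS))  # (2.5, 1.0, 1.0)
```

With this tile the 6x6 block holds 20 green, 8 red and 8 blue sensels, which is where 2.5:1:1 comes from.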

The CFA chosen by Fuji seems to be "quasi-random". Or perhaps more generally, Bayer is "quasi-random" with a repeat pattern of 2x2, while Fuji is "quasi-random" with a repeat pattern of 6x6. This makes it less likely that a particular color-contrast pattern in the scene will couple well with the CFA pattern, meaning that they can gamble on less AA filtering (for increased apparent sharpness).
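To make the "repeat pattern" point concrete, a rough sketch: tile each CFA over a small patch and search for the smallest horizontal shift that maps the mosaic onto itself. The X-Trans layout is again the commonly published one (an assumption), and `tile` and `horizontal_period` are hypothetical helper names.

```python
BAYER = ["RG",
         "GB"]

# Assumed X-Trans layout, as commonly published.
XTRANS = ["GBGGRG",
          "RGRBGB",
          "GBGGRG",
          "GRGGBG",
          "BGBRGR",
          "GRGGBG"]

def tile(pattern, height, width):
    """Repeat the CFA tile to cover a height x width patch of sensels."""
    ph, pw = len(pattern), len(pattern[0])
    return ["".join(pattern[r % ph][c % pw] for c in range(width))
            for r in range(height)]

def horizontal_period(mosaic):
    """Smallest horizontal shift (in sensels) that maps the mosaic onto itself."""
    width = len(mosaic[0])
    for p in range(1, width):
        if all(row[c] == row[c + p] for row in mosaic for c in range(width - p)):
            return p
    return width

print(horizontal_period(tile(BAYER, 12, 12)))   # 2
print(horizontal_period(tile(XTRANS, 12, 12)))  # 6
```

The longer period means scene detail has to repeat at a coarser, more specific spacing to line up with the CFA, which is the gamble described above.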

I am guessing that _when_ one is unfortunate enough to shoot a scene that "resonates well" with the Fuji CFA, results can be quite bad?

If you wanted to guarantee that no aliasing would ever appear in any color channel (something that cannot practically be done), then the Fuji sensor would seem to demand an even lower (achromatic) spatial cutoff frequency than Bayer (1/4 the sensel pitch).

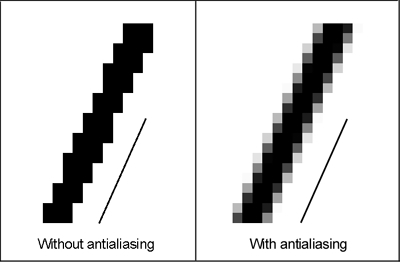

I wonder what is stopping them from making a truly randomized CFA pattern. Is it production cost or raw development processing cost? Once there is no pattern in the placement of red/green/blue, chances that some pattern in the scene would trigger a patterned artifact would be practically zero. You'd still see aliasing in the form of "unrealistically contrasty pixel values instead of a smoother edge-following function", but it seems that many photographers are perfectly happy with that.
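Just to illustrate what I mean by a truly randomized CFA, here is a small sketch that draws every sensel's filter color independently. The 2:1:1 weighting, the seed and the `random_cfa` name are all my own assumptions; this is not anything a manufacturer actually does as far as I know.

```python
import random

def random_cfa(height, width, weights=(2, 1, 1), seed=0):
    """Draw each sensel's color independently, with G:R:B weights (assumed 2:1:1)."""
    rng = random.Random(seed)  # fixed seed so the raw converter can rebuild the same map
    return ["".join(rng.choices("GRB", weights=weights, k=width))
            for _ in range(height)]

# Print a small patch of the resulting mosaic.
for row in random_cfa(6, 12):
    print(row)
```

Note that the raw converter would need the full color map (or the seed that generates it) for every sensor design, and purely independent draws can leave large local holes in one channel, so a practical design would probably constrain the randomness somehow.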

-h