I see too much focus on discussing the gamma issue, when it is largely irrelevant and requires no decisions from the photographer.

Very quickly the RAW development process involves:

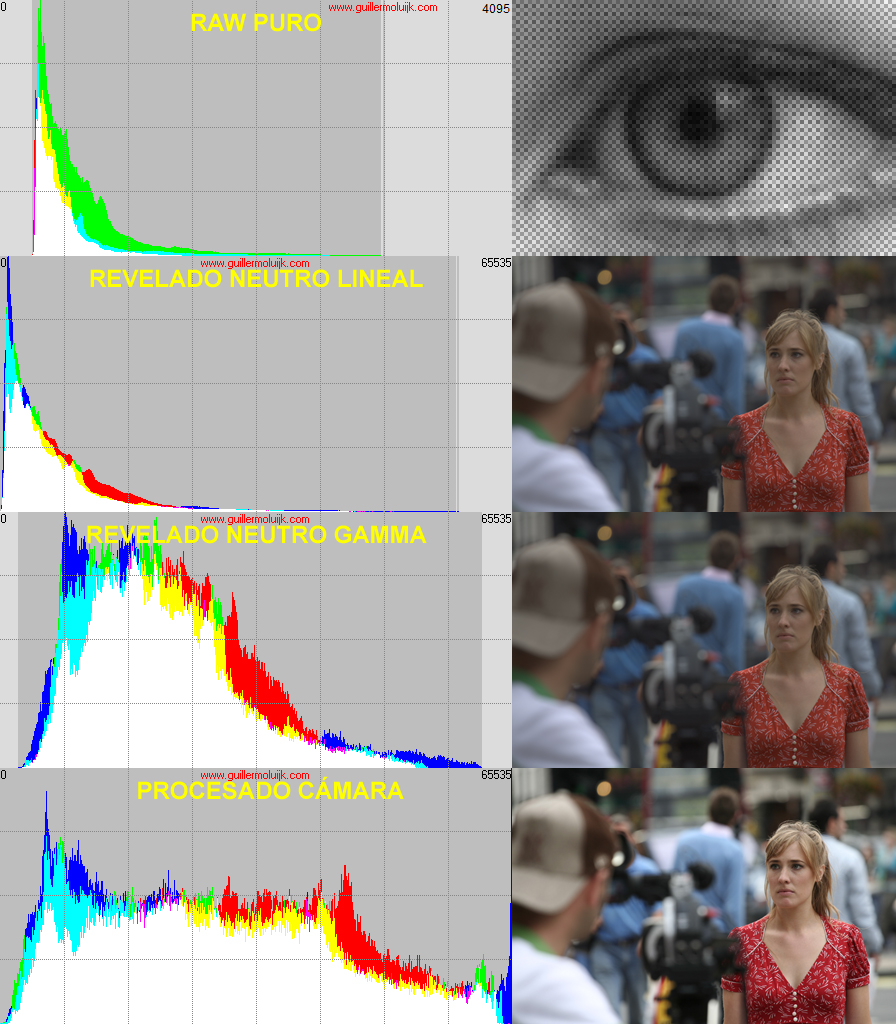

PURE RAW DEVELOPMENT

1. Decoding the RAW data (RGB values contained in the RAW file according to a proprietary format), usually from an RGGB Bayer matrix.

2. Applying some linear corrections to the RAW data to prepare them for demosaicing (subtracting the black point, scaling the saturation point to the maximum of the output scale)

3. White balance: in a more or less sophisticated way, WB is equivalent to an exposure adjustment (linear scaling) for each individual channel: R, G and B

4. Demosaicing: the missing RGB values are interpolated following a more or less sophisticated algorithm (AHD, AMaZE,...). In some RAW developers this step is done before the white balance.

5. Applying some shadow/highlight recovery or inpainting strategy in the areas where the RAW data are clipped: this could be considered post-processing, but since critical decisions are unavoidable here (especially making the highlights compatible with the non-uniform RGB scaling that WB implies), any RAW developer, no matter how basic, has some abilities in this regard.

6. Depending on how far the RAW developer listens to the RAW metadata, other adjustments can be made to the image: hidden exposure correction (baseline exposure), lens-dependent corrections (distortion, chromatic aberrations,...),...

7. Numerical conversion to a recognised/standard output colour profile (sRGB, Adobe RGB,...): this means a more or less sophisticated matrix or LUT conversion adapted to each camera sensor. Calibrated camera profiling would mean taking the standard procedure one step further. This stage usually includes applying the non-linear gamma curve intrinsic to the chosen output profile (gamma 2.2 for Adobe RGB, gamma 1.8 for ProPhoto RGB,...), which is quite irrelevant to the photographer.
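
Steps 2 and 3 are simple enough to sketch in a few lines of Python. This is only an illustration, not the dcraw code: the black point, saturation point, WB multipliers and RAW values below are made-up numbers, and the function name is my own.

```python
# Illustrative values only, not taken from any particular camera.
BLACK = 255        # RAW level that corresponds to no light
SATURATION = 4095  # RAW level where the sensor clips
OUT_MAX = 65535    # top of a 16-bit output scale

# Hypothetical white balance multipliers, one per channel.
WB = {"R": 2.0, "G": 1.0, "B": 1.5}

# Colour of each photosite in a 2x2 RGGB Bayer tile.
BAYER = [["R", "G"], ["G", "B"]]

def scale_raw(mosaic):
    """Subtract black, stretch to the output range and apply WB per photosite."""
    stretch = OUT_MAX / (SATURATION - BLACK)
    out = []
    for y, row in enumerate(mosaic):
        out_row = []
        for x, value in enumerate(row):
            channel = BAYER[y % 2][x % 2]
            linear = max(value - BLACK, 0) * stretch * WB[channel]
            out_row.append(min(round(linear), OUT_MAX))  # clip to the output range
        out.append(out_row)
    return out

# One RGGB tile of a grey patch: the RAW channels differ,
# but they come out equal once WB is applied.
print(scale_raw([[1215, 2175], [2175, 1535]]))
```

Everything here is a per-channel multiplication, which is why WB behaves like an exposure adjustment on each channel. The clipping on the last line of the inner loop is where the highlight decisions of step 5 come from: with multipliers above 1, a channel can reach the top of the scale before the others do.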

IMAGE POST-PROCESSING

From the steps above, we would have a nearly standard neutral RAW development that should not differ too much in rendering whatever the software used (leaving aside metadata-dependent corrections). But the resulting image would look flat and dull, lacking saturation and sharpness. This is where the non-standard procedures come in, and they differ a lot from one software to another (this includes the camera engines that build the camera's output JPEG):

- Tone/contrast curve

- Saturation adjustments

- Noise reduction (probably ISO dependent)

- Sharpening (even if the user value is set to 0)
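
To give an idea of what the first two items look like numerically, here is a minimal Python sketch. It is not what any particular RAW developer or camera does: the S-shaped curve (a plain smoothstep) and the saturation formula are my own simple stand-ins, and real engines use their own, usually undisclosed, curves.

```python
def s_curve(v):
    """Smoothstep contrast curve on a 0..1 value: keeps 0, 0.5 and 1 fixed."""
    return v * v * (3.0 - 2.0 * v)

def adjust_saturation(rgb, amount):
    """Move each channel away from (amount > 1) or towards (amount < 1) the grey level."""
    r, g, b = rgb
    grey = 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 luma weights
    return tuple(min(1.0, max(0.0, grey + amount * (c - grey))) for c in rgb)

# Shadows get darker and highlights brighter, so contrast increases.
for v in (0.1, 0.25, 0.5, 0.75, 0.9):
    print(f"{v:.2f} -> {s_curve(v):.3f}")

# A dull reddish pixel becomes more saturated.
print(adjust_saturation((0.6, 0.4, 0.4), 1.5))
```

Unlike the steps in the pure RAW development, the shape of the curve and the amount of saturation are arbitrary choices, and that is why renderings differ so much between programs.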

DCRAW is a very good learning tool to understand how RAW development takes place. If you have some programming skills, looking at the source code is also a very good way to understand how it works (the very simple scale_colors() function performs steps 2 and 3 in a handful of lines). This is how DCRAW responds to a custom RAW development request:

c:\dcraw>dcraw -v -w -o 2 -g 2.2 0 -H 2 -4 -T 1.ORF

[-w: camera WB, -o 2: Adobe RGB output, -g 2.2 0: standard 2.2 gamma curve, -H 2: neutral and preserving highlight strategy]

Loading Olympus E-P5 image from 1.ORF ... [loading and decoding RAW data]

Scaling with darkness 255, saturation 4095, and [linear scaling to output range]

multipliers 1.000000 0.444444 0.649306 0.444444 [linear scaling white balance]

AHD interpolation... [AHD demosaicing]

Blending highlights... [highlight strategy]

Converting to Adobe RGB (1998) colorspace... [standard colour profile conversion]

Writing data to 1.tiff ...

This is a simplified view of the process:

1. Pure RAW data

2. Linear neutral RAW development (camera WB, sRGB)

3. Same as 2 plus sRGB specific gamma curve

4. Camera JPEG obtained using tone curve and other adjustments

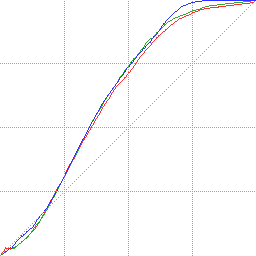
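
The step from 2 to 3 is just a fixed curve applied to every value, with nothing for the photographer to choose. A small Python sketch, using the standard sRGB transfer function next to a pure 2.2 gamma for comparison (the function names are mine):

```python
def srgb_encode(linear):
    """Standard sRGB transfer curve for a linear value in 0..1."""
    if linear <= 0.0031308:
        return 12.92 * linear  # short linear segment near black
    return 1.055 * linear ** (1 / 2.4) - 0.055

def pure_gamma_encode(linear, gamma=2.2):
    """Plain power-law gamma, as used by profiles such as Adobe RGB."""
    return linear ** (1 / gamma)

for linear in (0.0, 0.05, 0.18, 0.5, 1.0):
    print(f"{linear:.2f} -> sRGB {srgb_encode(linear):.3f}, "
          f"gamma 2.2 {pure_gamma_encode(linear):.3f}")
```

The two curves give very similar values across most of the range, and both are fully determined by the output profile, which is why this part of the process needs no discussion compared with the tone curve that comes next.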

The translation from 3 to 4 corresponds to the following tone curve:

Regards